Review cadence that keeps Data & AI pilots honest

- 14 May 2026

- In Blog, Delivery

- ~7 min read

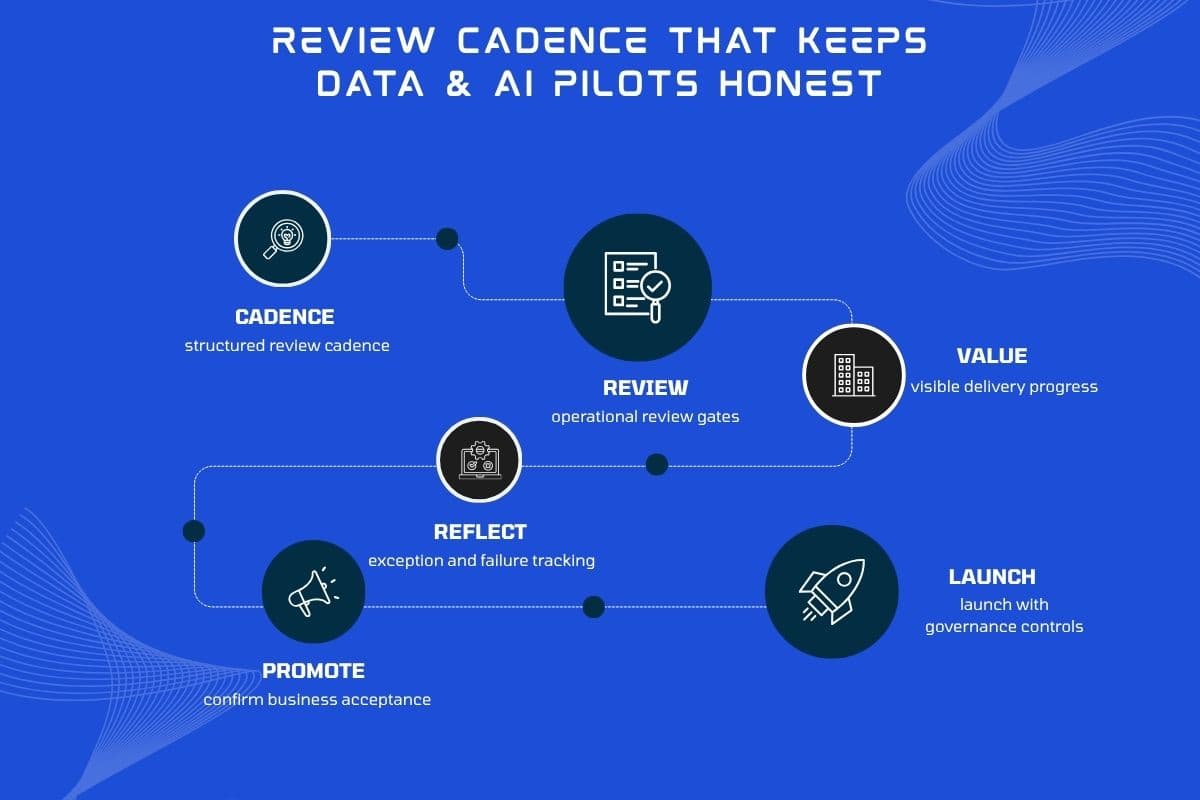

Data and AI pilots tend to fail in two predictable ways: steering theatre (beautiful decks, nothing runnable) and quiet drift (engineering ships, operations never adopts). A steady review rhythm guards against both, provided each session shows real flows on realistic data shapes in a named environment, with acceptance criteria written in language operations recognises.

Cadence: weekly or fortnightly, never “when ready”

Default to weekly; fortnightly is fine for mature teams. “When we have something to show” quietly becomes never. Lock a recurring 45-minute slot. If the slot is empty twice in a row, treat that as a delivery risk, not a calendar win: usually a slice is too large or its dependencies are unclear.

What belongs on the agenda

- One slice of value — e.g. “triage these five ticket types,” “answer these internal FAQs with citations,” “sync this object nightly with conflict rules.”

- Exceptions in plain sight — show the top three failure modes from the last week: missing fields, ambiguous policy text, auth edge cases.

- Audit trail — for anything that writes to CRM or sends mail, show what was decided, by which rule, and what a human can override.
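For a pilot that writes to a CRM or sends email, the audit trail can start as one record per automated action. The sketch below is a hypothetical shape, assuming a Python pipeline; the field names (`action`, `decision`, `rule_id`, `overridable`, `override_decision`) and the example rule `RENEWAL-30D` are illustrative, not a standard schema or any product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditRecord:
    """One automated action, recorded so a reviewer can trace and reverse it.

    Hypothetical schema for illustration; adapt field names to your systems.
    """
    action: str                              # e.g. "send_email", "update_crm_contact"
    decision: str                            # what the automation decided
    rule_id: str                             # which rule or policy produced the decision
    overridable: bool                        # may a human reverse this decision?
    overridden_by: Optional[str] = None      # reviewer who overrode it, if anyone
    override_decision: Optional[str] = None  # what the reviewer decided instead
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def override(self, reviewer: str, new_decision: str) -> None:
        """Record a human override, keeping the original decision intact."""
        if not self.overridable:
            raise PermissionError(f"rule {self.rule_id} does not allow overrides")
        self.overridden_by = reviewer
        self.override_decision = new_decision


# Usage: the automation proposes an email, an operations lead holds it.
record = AuditRecord(
    action="send_email",
    decision="send renewal reminder",
    rule_id="RENEWAL-30D",  # hypothetical rule identifier
    overridable=True,
)
record.override(reviewer="ops.lead", new_decision="hold: account in dispute")
print(record.decision, "|", record.override_decision)
# prints: send renewal reminder | hold: account in dispute
```

Keeping the original decision next to the override, rather than overwriting it, is what lets a review session answer “what did the rule do, and who changed it?”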

Written acceptance beats vibes

Each slice ships with 5–10 checkboxes a business owner can tick, covering inputs, outputs, error handling, and who gets notified. New ideas go into a backlog with a rough size estimate before they are allowed to displace the current slice. That keeps scope honest without muting good feedback from the floor.
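If the checkboxes live in a tracker or repository rather than on a slide, they can be checked mechanically before each review. A minimal sketch in Python, assuming the four area labels taken from the paragraph above; the `Check` type, the helper names, and the ticket-triage criteria (including the threshold of 20) are hypothetical examples.

```python
from dataclasses import dataclass

# The four areas every acceptance checklist should cover (labels are illustrative).
REQUIRED_AREAS = {"inputs", "outputs", "error handling", "notification"}


@dataclass
class Check:
    area: str              # one of REQUIRED_AREAS
    statement: str         # plain-language criterion a business owner can tick
    ticked: bool = False


def checklist_problems(checks: list[Check]) -> list[str]:
    """Return reasons the checklist is not well-formed; empty means it is."""
    problems = []
    if not 5 <= len(checks) <= 10:
        problems.append(f"expected 5-10 checks, found {len(checks)}")
    missing = REQUIRED_AREAS - {c.area for c in checks}
    if missing:
        problems.append("no check covers: " + ", ".join(sorted(missing)))
    return problems


def slice_accepted(checks: list[Check]) -> bool:
    """Accept a slice only when the checklist is well-formed and fully ticked."""
    return not checklist_problems(checks) and all(c.ticked for c in checks)


# Usage with hypothetical criteria for a ticket-triage slice.
checks = [
    Check("inputs", "Reads the five agreed ticket types from the shared queue", True),
    Check("outputs", "Assigns each ticket a triage category", True),
    Check("outputs", "Writes the category back to the ticket", True),
    Check("error handling", "Tickets with missing fields go to a manual queue", True),
    Check("notification", "Team lead is alerted when the manual queue exceeds 20", False),
]
print(checklist_problems(checks))  # prints: []
print(slice_accepted(checks))      # prints: False (one check not yet ticked)
```

The split between “well-formed” and “accepted” mirrors the review: a malformed checklist is a planning problem to fix before the session, while an unticked box is a delivery conversation for the session itself.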

Who needs a seat

Core trio: an operational sponsor (who can say no), a technical lead, and someone who lives the workflow daily. Bring in compliance or IT security when access, retention, or logging is on the agenda, not every week.

If leadership only sees the pilot at go-live, you have not run a pilot — you have run a bet.

Red flags

- Demos that only work on a developer laptop or anonymised toy data.

- No shared staging tenant that mirrors production permissions.

- Success defined as “model accuracy” without a business outcome metric.

How we work with you

We stage delivery with visible environments and review gates tied to the shape of your programme. For workflow-heavy builds, see our operational apps & integrations and knowledge base work.

Design your review rhythm

Tell us about your stakeholders and systems, and we will propose a cadence, environments, and an acceptance pattern that fit your pilot.